AWS Transit Gateway for Connecting to On-Premises Networks: A Thorough Study

Published: January 23, 2024

Introduction

AWS Transit Gateway is a networking service that acts as a central hub connecting Amazon Web Services (AWS) virtual private clouds (VPCs) and on-premises networks. Without it, each VPC has to be linked to every other VPC and to each data center individually. With it, every network attaches once to the transit gateway, which routes traffic between the attachments. This hub-and-spoke design streamlines network management and reduces operational overhead for organizations running workloads on AWS.

By routing traffic through a transit gateway, businesses gain a central point for managing connectivity across multiple VPCs and on-premises environments. Every network attaches once to the hub, so there is no web of peering relationships between individual VPCs to maintain. This makes the architecture easier to scale and gives administrators one place to control which networks are allowed to communicate.

In this study, we examine AWS Transit Gateway in detail: its benefits, setup process, best practices, and real-world applications. With a deeper understanding of the service, businesses can use it to optimize their network connectivity, strengthen their infrastructure, and drive operational excellence.

What is AWS Transit Gateway?

AWS Transit Gateway is a managed, highly scalable networking service. Each transit gateway is a Regional resource. Within its AWS Region, it serves as the hub through which attached VPCs, Site-to-Site VPN connections, and Direct Connect gateways exchange traffic. Transit gateways in different Regions can be connected to each other through transit gateway peering. The result is one managed routing layer in place of many individually managed connections.

At its core, AWS Transit Gateway is a single point of connectivity for network traffic. It replaces the traditional approach of peering individual VPCs with one another. VPC peering is non-transitive: if VPC A peers with B and B peers with C, A still cannot reach C through B. Connecting every VPC to every other therefore requires a full mesh of n(n-1)/2 peering connections for n VPCs. With a transit gateway, each network needs only one attachment to the hub. Consolidating traffic this way makes the architecture easier to scale and reduces the overhead of building, documenting, and troubleshooting many individual connections.
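To put numbers on the peering-versus-hub comparison, this Python sketch counts the connections each design needs. A full mesh of non-transitive VPC peering needs one connection per pair of VPCs, while a transit gateway needs one attachment per network. The mesh count covers VPC-to-VPC links only; on-premises connections would add more. The hub count assumes one on-premises attachment by default. The function names are illustrative.

```python
def full_mesh_peerings(vpc_count: int) -> int:
    """VPC peering is non-transitive, so every pair of VPCs that must
    communicate needs its own peering connection: n * (n - 1) / 2."""
    if vpc_count < 0:
        raise ValueError("vpc_count must be non-negative")
    return vpc_count * (vpc_count - 1) // 2


def hub_attachments(vpc_count: int, onprem_links: int = 1) -> int:
    """With a transit gateway, each VPC and each on-premises connection
    (a VPN connection or a Direct Connect gateway) is one attachment."""
    if vpc_count < 0 or onprem_links < 0:
        raise ValueError("counts must be non-negative")
    return vpc_count + onprem_links


if __name__ == "__main__":
    for n in (5, 20, 100):
        print(f"{n:>3} VPCs: full mesh = {full_mesh_peerings(n):>4} peerings, "
              f"hub = {hub_attachments(n):>3} attachments")
```

The figures grow apart quickly:

| VPCs | Full-mesh peerings | Hub attachments |
|-----:|-------------------:|----------------:|
| 5    | 10                 | 6               |
| 20   | 190                | 21              |
| 100  | 4,950              | 101             |

VPC peering is also subject to a per-VPC quota on active peering connections.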

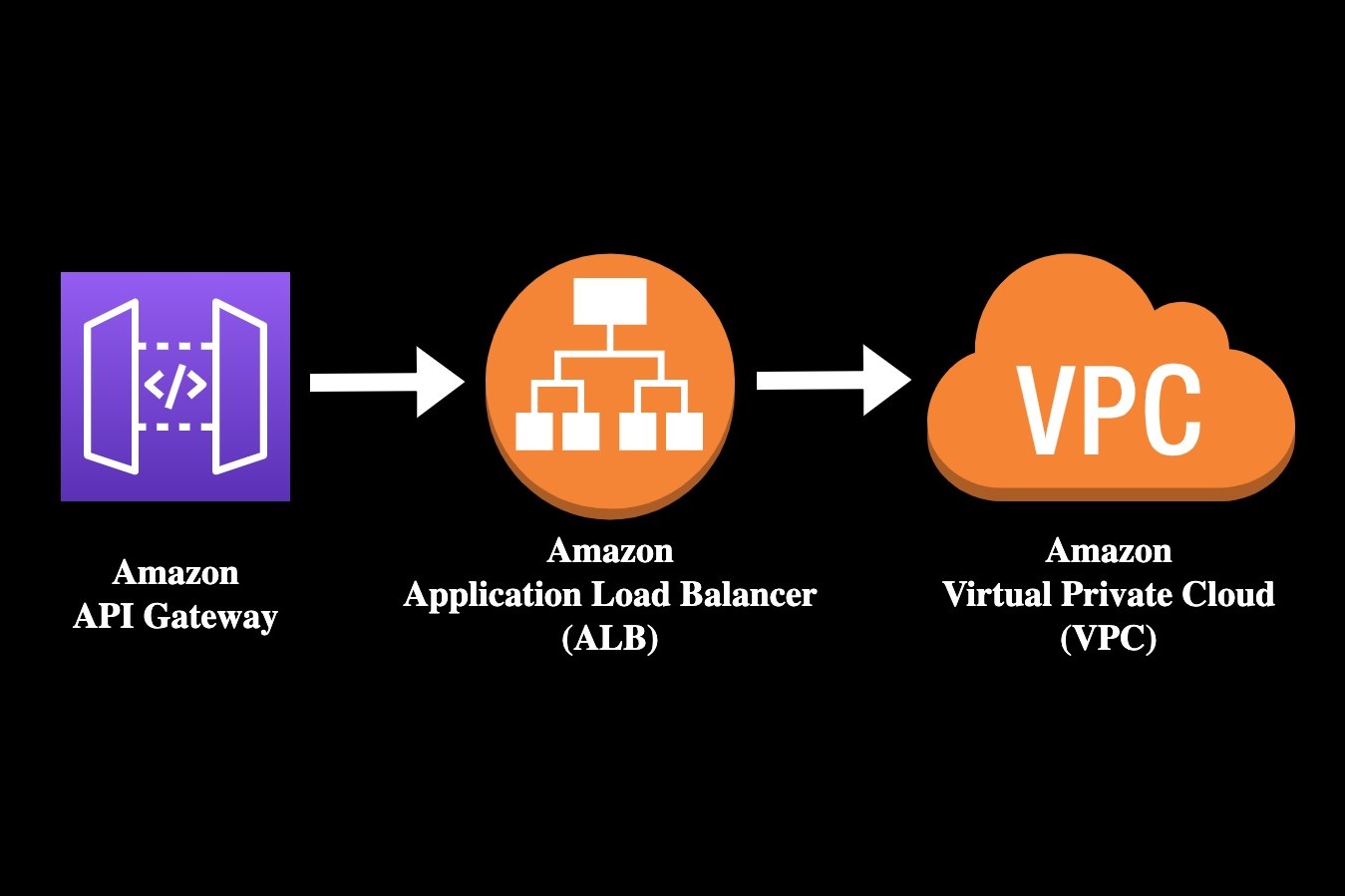

One of the key advantages of AWS Transit Gateway is that on-premises networks connect to the same hub as the VPCs. There are two main options:

- AWS Site-to-Site VPN runs IPsec tunnels over the internet and terminates directly on the transit gateway.
- AWS Direct Connect provides a dedicated private connection and reaches the transit gateway through a Direct Connect gateway and a transit virtual interface.

Once connected, on-premises resources can reach every attached VPC through the gateway. Businesses can then use AWS services while keeping their existing data centers part of one integrated network.

Routing is handled by transit gateway route tables. Each attachment is associated with at most one route table, which determines where that attachment's traffic is sent. Each attachment can also propagate its routes into one or more route tables. VPC attachments propagate the VPC's CIDR blocks. VPN and Direct Connect gateway attachments propagate prefixes learned over BGP, so on-premises routes can appear in the gateway without manual entry. VPC route tables are not updated automatically, however: each VPC still needs routes that send on-premises-bound traffic to the transit gateway. Because routing decisions are centralized, organizations can also enforce segmentation (for example, keeping development VPCs away from production) by controlling which route tables each attachment is associated with and propagates to.
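A simplified model shows how a transit gateway route table picks a next hop. This Python sketch assumes two of the route evaluation rules AWS documents: the most specific prefix wins, and for identical prefixes a static route takes priority over a propagated one. It omits the finer ordering AWS applies among propagated routes from different attachment types. All attachment names are hypothetical.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    cidr: str             # destination prefix, e.g. "10.1.0.0/16"
    attachment: str       # hypothetical attachment name, e.g. "vpc-a"
    static: bool = False  # True for a static route, False for a propagated one


def lookup(routes: list[Route], destination: str) -> Optional[str]:
    """Return the attachment a simplified transit gateway route table would
    forward `destination` to, or None when no route matches."""
    addr = ipaddress.ip_address(destination)
    matches = [r for r in routes if addr in ipaddress.ip_network(r.cidr)]
    if not matches:
        return None
    # Most specific prefix wins; for identical prefixes, a static route
    # beats a propagated one (True sorts above False).
    best = max(matches,
               key=lambda r: (ipaddress.ip_network(r.cidr).prefixlen, r.static))
    return best.attachment


# Hypothetical route table contents.
EXAMPLE_ROUTES = [
    Route("10.1.0.0/16", "vpc-a"),                        # propagated from VPC A
    Route("10.2.0.0/16", "vpc-b"),                        # propagated from VPC B
    Route("10.2.0.0/16", "inspection-vpc", static=True),  # static override
    Route("192.168.0.0/16", "vpn-onprem"),                # learned over BGP
    Route("0.0.0.0/0", "egress-vpc", static=True),        # default route
]
```

With these routes, `lookup(EXAMPLE_ROUTES, "10.2.0.9")` returns `"inspection-vpc"`: the static route wins the tie at /16. `"192.168.10.20"` goes to `"vpn-onprem"`.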

In essence, AWS Transit Gateway replaces a mesh of point-to-point connections with a single managed hub. Organizations get one place to connect, route, and segment their AWS and on-premises networks, a foundation that can grow with the evolving demands of modern enterprises.

Benefits of using AWS Transit Gateway for connecting to on-premises networks

AWS Transit Gateway offers a myriad of benefits for organizations seeking to establish seamless connectivity between their AWS infrastructure and on-premises networks. By leveraging this innovative networking solution, businesses can unlock a host of advantages that streamline operations, enhance network performance, and bolster overall connectivity.

Simplified Network Management

AWS Transit Gateway simplifies network management by serving as a centralized hub for routing network traffic. This eliminates the need for complex peering relationships between individual VPCs and consolidates network connectivity in one place. Administrators can manage routing configurations and network segmentation from a central location, which reduces operational overhead and shows more clearly how traffic flows between networks.

Scalability and Flexibility

AWS Transit Gateway lets organizations expand their network as the business grows. AWS's published quota allows up to 5,000 attachments per transit gateway. VPC peering, by contrast, is limited by a per-VPC quota on active peering connections and by the number of pairwise connections a full mesh requires. This headroom lets the network architecture adapt to changing business requirements.

Enhanced Network Performance

AWS Transit Gateway is built to carry large volumes of traffic, and each VPC attachment supports high burst bandwidth. Site-to-Site VPN connections that use BGP can enable equal-cost multi-path (ECMP) routing, which spreads traffic across multiple tunnels and aggregates more bandwidth than a single tunnel provides. The gateway does add a hop compared with direct VPC peering. Any latency improvement for a given path should therefore be measured rather than assumed.

Cost Savings

The cost benefits of AWS Transit Gateway come mainly from reduced operational complexity: fewer connections to build, document, and troubleshoot as the network grows. Savings on data transfer are not automatic. The service is billed per attachment per hour and per gigabyte of data processed, while VPC peering has no hourly or per-GB processing fee. Moving high-volume VPC-to-VPC traffic onto a transit gateway can therefore raise that part of the bill. As a managed service, however, Transit Gateway requires no up-front capital investment in routing hardware on the AWS side.
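A rough monthly estimate makes the pricing trade-off concrete. This Python sketch assumes a transit gateway bill made of two parts: an hourly charge per attachment and a per-GB charge for data processed. The rates are parameters on purpose, and the values in the usage example are placeholders, not quoted AWS prices; check the Transit Gateway pricing page for your Region. The function name and the 730-hour month are illustrative assumptions.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours / 12 months


def monthly_tgw_cost(attachments: int, gb_processed: float,
                     attachment_hour_rate: float, per_gb_rate: float,
                     hours: float = HOURS_PER_MONTH) -> float:
    """Estimate one month of transit gateway charges: an hourly fee for each
    attachment plus a per-GB fee for data processed by the gateway."""
    if attachments < 0 or gb_processed < 0 or hours < 0:
        raise ValueError("inputs must be non-negative")
    attachment_cost = attachments * hours * attachment_hour_rate
    processing_cost = gb_processed * per_gb_rate
    return attachment_cost + processing_cost
```

For example, `monthly_tgw_cost(10, 5_000, attachment_hour_rate=0.05, per_gb_rate=0.02)` comes to roughly 465 in the placeholder currency. About 365 of that is attachment-hours and 100 is data processing. The processing share grows with traffic volume, which is why high-throughput VPC-to-VPC flows deserve a separate comparison against peering.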

Seamless Integration with On-Premises Networks

A single transit gateway can terminate both Site-to-Site VPN connections and, through a Direct Connect gateway, AWS Direct Connect links. Organizations can therefore combine the two, for example using Direct Connect as the primary path and VPN as a backup, with routes exchanged over BGP. Every attached VPC then reaches the data center through the same hub, rather than each VPC needing its own on-premises connection.

In summary, AWS Transit Gateway gives organizations several advantages when connecting to on-premises networks:

- simpler network management
- room to scale
- flexible bandwidth options
- lower operational overhead
- a single integration point for on-premises environments

Together, these help optimize network connectivity and lay the groundwork for an infrastructure that meets the evolving demands of modern enterprises.

Setting up AWS Transit Gateway for connecting to on-premises networks

Setting up AWS Transit Gateway for connecting to on-premise networks involves a series of well-defined steps to establish seamless connectivity and enable efficient communication between AWS infrastructure and on-premises environments. This process encompasses the configuration of transit gateway attachments, route propagation, and the integration of VPN or AWS Direct Connect for secure connectivity. Here’s a detailed overview of the essential steps involved in setting up AWS Transit Gateway for connecting to on-premise networks:

1. Create a Transit Gateway

The first step is to create the transit gateway, using the AWS Management Console, the AWS CLI, or an SDK. At creation time, administrators set:

- its name and description
- the private Autonomous System Number (ASN) used on the AWS side of BGP sessions
- whether new attachments are automatically associated with and propagated to the default route table
- whether equal-cost multi-path (ECMP) routing is enabled for VPN connections

VPCs are not selected at this stage; they are attached in the next step.
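For teams that script their setup, the creation step might look like the following Python sketch. It only builds the keyword arguments for boto3's EC2 `create_transit_gateway` call, so it runs without AWS credentials. The option values are illustrative choices for this example, not required settings: private ASN 64512, ECMP on, and default route table association and propagation off. AWS itself enables the last two by default. The function names are hypothetical.

```python
from __future__ import annotations

from typing import Any


def _flag(enabled: bool) -> str:
    """The EC2 API expresses these options as the strings 'enable'/'disable'."""
    return "enable" if enabled else "disable"


def transit_gateway_request(name: str, description: str,
                            amazon_side_asn: int = 64512,
                            vpn_ecmp: bool = True,
                            default_route_table: bool = False) -> dict[str, Any]:
    """Build keyword arguments for boto3's EC2 create_transit_gateway call."""
    # A transit gateway needs a private ASN: 64512-65534 (16-bit)
    # or 4200000000-4294967294 (32-bit).
    if not (64512 <= amazon_side_asn <= 65534
            or 4200000000 <= amazon_side_asn <= 4294967294):
        raise ValueError(f"{amazon_side_asn} is not a private ASN")
    return {
        "Description": description,
        "Options": {
            "AmazonSideAsn": amazon_side_asn,
            # Off by default in this sketch so that every attachment is
            # placed into a route table explicitly (useful for segmentation).
            "DefaultRouteTableAssociation": _flag(default_route_table),
            "DefaultRouteTablePropagation": _flag(default_route_table),
            "VpnEcmpSupport": _flag(vpn_ecmp),
            "DnsSupport": "enable",
        },
        "TagSpecifications": [{
            "ResourceType": "transit-gateway",
            "Tags": [{"Key": "Name", "Value": name}],
        }],
    }
```

With boto3 installed and credentials configured, the request would be sent as `boto3.client("ec2").create_transit_gateway(**transit_gateway_request("core-tgw", "Hub for VPCs and on-premises"))`. The new gateway's ID is found at `response["TransitGateway"]["TransitGatewayId"]`.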

2. Attach VPCs and VPN Connections

Once the transit gateway is available, the next step is to attach the relevant VPCs. A VPC attachment specifies one subnet in each Availability Zone that the VPC's workloads use. Resources in an Availability Zone with no attachment subnet cannot reach the transit gateway. For on-premises connectivity, administrators create two resources: a customer gateway that represents the on-premises VPN device, and a Site-to-Site VPN connection that terminates on the transit gateway. Alternatively, or in addition, AWS Direct Connect provides dedicated, private connectivity. It reaches the transit gateway through a transit virtual interface on a Direct Connect gateway, which is then associated with the transit gateway.
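The attachment step can be sketched the same way. This Python example builds keyword arguments for three boto3 EC2 calls: `create_transit_gateway_vpc_attachment`, `create_customer_gateway`, and `create_vpn_connection`. It sends nothing to AWS. The helper names and every ID, IP address, and ASN in the usage note are hypothetical.

```python
from __future__ import annotations

import ipaddress
from typing import Any


def vpc_attachment_request(tgw_id: str, vpc_id: str,
                           subnet_ids: list[str]) -> dict[str, Any]:
    """Arguments for create_transit_gateway_vpc_attachment.
    Pass one subnet per Availability Zone the VPC's workloads use."""
    if not subnet_ids:
        raise ValueError("at least one subnet is required")
    return {
        "TransitGatewayId": tgw_id,
        "VpcId": vpc_id,
        "SubnetIds": list(subnet_ids),
        "Options": {"DnsSupport": "enable"},
    }


def customer_gateway_request(public_ip: str, bgp_asn: int) -> dict[str, Any]:
    """Arguments for create_customer_gateway, describing the on-premises
    VPN device by its public IPv4 address and BGP ASN."""
    if ipaddress.ip_address(public_ip).version != 4:
        raise ValueError("expected an IPv4 address")
    return {"Type": "ipsec.1", "PublicIp": public_ip, "BgpAsn": bgp_asn}


def vpn_connection_request(tgw_id: str, customer_gateway_id: str,
                           use_bgp: bool = True) -> dict[str, Any]:
    """Arguments for create_vpn_connection terminating on the transit gateway.
    BGP (dynamic routing) lets on-premises prefixes propagate automatically
    and is required for VPN ECMP; static routing needs routes added by hand."""
    return {
        "Type": "ipsec.1",
        "CustomerGatewayId": customer_gateway_id,
        "TransitGatewayId": tgw_id,
        "Options": {"StaticRoutesOnly": not use_bgp},
    }
```

The order matters. First, create the customer gateway, for example from `customer_gateway_request("203.0.113.10", 65010)`. Then read its ID from `response["CustomerGateway"]["CustomerGatewayId"]` and pass that ID to `vpn_connection_request`. The Direct Connect path uses the separate `directconnect` client (a Direct Connect gateway, a transit virtual interface, and a gateway association) and is not sketched here.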

3. Configure Route Propagation

Route propagation is a critical part of the setup.

- Associate each attachment with a transit gateway route table.
- Enable propagation so that the table learns the attachment's routes automatically: CIDR blocks from VPC attachments and BGP-learned prefixes from VPN or Direct Connect.
- Add static routes where BGP is not used.
- Give each VPC's subnet route tables routes for the on-premises prefixes that target the transit gateway. Without them, traffic from the VPC never enters the gateway.
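One way to script these routing steps is to build a list of boto3 EC2 calls and then execute it. The Python sketch below uses the real EC2 operations `associate_transit_gateway_route_table`, `enable_transit_gateway_route_table_propagation`, and `create_route` (with `TransitGatewayId` as the target). The plan-then-apply structure, the function names, and the single shared route table are assumptions for illustration. It also assumes the gateway was created with default route table association turned off. Otherwise the association calls would conflict, because an attachment can be associated with only one route table.

```python
from __future__ import annotations

import ipaddress
from typing import Any


def routing_plan(tgw_id: str, tgw_route_table_id: str,
                 attachment_ids: list[str], vpc_route_table_ids: list[str],
                 onprem_cidrs: list[str]) -> list[tuple[str, dict[str, Any]]]:
    """Return (EC2 method name, keyword arguments) pairs that wire the
    attachments into one transit gateway route table and point VPC subnet
    route tables at the gateway for on-premises prefixes."""
    plan: list[tuple[str, dict[str, Any]]] = []
    for attachment_id in attachment_ids:
        ids = {"TransitGatewayRouteTableId": tgw_route_table_id,
               "TransitGatewayAttachmentId": attachment_id}
        # Association: this table decides where the attachment's traffic goes.
        plan.append(("associate_transit_gateway_route_table", dict(ids)))
        # Propagation: the attachment's routes (VPC CIDRs or BGP-learned
        # on-premises prefixes) are installed in this table automatically.
        plan.append(("enable_transit_gateway_route_table_propagation", dict(ids)))
    for rtb_id in vpc_route_table_ids:
        for cidr in onprem_cidrs:
            plan.append(("create_route", {
                "RouteTableId": rtb_id,
                # Validates the CIDR (ValueError if malformed or host bits set).
                "DestinationCidrBlock": str(ipaddress.ip_network(cidr)),
                "TransitGatewayId": tgw_id,
            }))
    return plan


def apply_plan(ec2_client: Any, plan: list[tuple[str, dict[str, Any]]]) -> None:
    """Execute the plan against an EC2 client such as boto3.client("ec2")."""
    for method_name, kwargs in plan:
        getattr(ec2_client, method_name)(**kwargs)
```

Keeping the plan as plain data makes it easy to review, or to diff against what already exists, before anything is changed in the account.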

4. Implement Network Policies and Controls

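Access control on a transit gateway is layered.

- On the gateway itself, segmentation is expressed through route tables. Attachments that should not communicate are associated with route tables that do not contain each other's routes, and blackhole routes can explicitly drop traffic to a prefix.
- Security groups and network ACLs are VPC-level controls, so they are configured in the attached VPCs rather than on the gateway.
- For deeper inspection, traffic can be routed through an inspection VPC running AWS Network Firewall or third-party appliances.

Combining these layers strengthens the security posture of the network and limits how far a compromise can spread.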
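Route-table segmentation can be reasoned about with a small model. In this Python sketch, two attachments can communicate only if each one's associated route table contains the other's propagated routes. The sketch models association and propagation only. It ignores static and blackhole routes as well as the security groups and network ACLs that also apply inside each VPC. The route table and attachment names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RouteTable:
    name: str
    # Attachments associated with this table: it decides where their traffic goes.
    associations: set[str] = field(default_factory=set)
    # Attachments whose routes are propagated into this table.
    propagations: set[str] = field(default_factory=set)


def associated_table(tables: list[RouteTable], attachment: str) -> Optional[RouteTable]:
    """An attachment is associated with at most one route table."""
    for table in tables:
        if attachment in table.associations:
            return table
    return None


def can_reach(tables: list[RouteTable], src: str, dst: str) -> bool:
    """True if src's table has routes to dst and dst's table has routes back."""
    forward = associated_table(tables, src)
    reverse = associated_table(tables, dst)
    return (forward is not None and dst in forward.propagations
            and reverse is not None and src in reverse.propagations)


# Hypothetical segmentation: production and development both reach shared
# services, only production reaches on-premises, and development cannot
# reach production.
TABLES = [
    RouteTable("prod-rt", {"vpc-prod"}, {"vpc-shared", "vpn-onprem"}),
    RouteTable("dev-rt", {"vpc-dev"}, {"vpc-shared"}),
    RouteTable("shared-rt", {"vpc-shared"}, {"vpc-prod", "vpc-dev", "vpn-onprem"}),
    RouteTable("onprem-rt", {"vpn-onprem"}, {"vpc-prod", "vpc-shared"}),
]
```

Checking both directions matters. A route in one table without a return route in the other still leaves the two networks unable to hold a conversation.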

5. Testing and Validation

Once the configuration is complete, test and validate the connectivity between AWS Transit Gateway and the on-premises network. Check that:

- both tunnels of each Site-to-Site VPN connection are up, or BGP is established on the Direct Connect virtual interface
- on-premises prefixes appear in the transit gateway route table
- VPC CIDR blocks are advertised to the on-premises routers
- hosts can reach each other in both directions
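Two of these checks are easy to automate. This Python sketch reads tunnel status from the dictionary that boto3's EC2 `describe_vpn_connections` returns, whose `VgwTelemetry` entries carry a `Status` of `"UP"` or `"DOWN"`. It also attempts a plain TCP handshake using the standard library's `socket.create_connection`. The helper names are hypothetical. A successful TCP handshake confirms routing and filtering for that one port only, not for all traffic.

```python
from __future__ import annotations

import socket
from typing import Any


def tunnel_status(describe_response: dict[str, Any]) -> dict[str, list[str]]:
    """Map each VPN connection ID to its tunnels' status ("UP"/"DOWN"), read
    from the dict returned by boto3's EC2 describe_vpn_connections."""
    return {
        conn["VpnConnectionId"]: [t.get("Status", "UNKNOWN")
                                  for t in conn.get("VgwTelemetry", [])]
        for conn in describe_response.get("VpnConnections", [])
    }


def fully_redundant(statuses: dict[str, list[str]]) -> bool:
    """True when every VPN connection has at least two tunnels and all are UP."""
    return all(len(s) >= 2 and all(x == "UP" for x in s)
               for s in statuses.values())


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Attempt a TCP handshake, e.g. from an on-premises host to a private IP
    in an attached VPC. Returns False on refusal, timeout, or no route."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

A connection whose tunnels report `["UP", "DOWN"]` still passes traffic but has lost its redundancy, so it is worth alerting on. For the routing checks, the EC2 `search_transit_gateway_routes` API can confirm that on-premises prefixes are present in a transit gateway route table.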

By following these steps, organizations can effectively set up AWS Transit Gateway for connecting to on-premise networks, establishing a robust and seamless network infrastructure that fosters efficient communication and connectivity between AWS and on-premises environments.

Best practices for using AWS Transit Gateway

When leveraging AWS Transit Gateway for connecting to on-premise networks, it is essential to adhere to best practices to optimize network performance, enhance security, and streamline network management. By following these best practices, organizations can maximize the benefits of AWS Transit Gateway and establish a robust and efficient network infrastructure. Here are the key best practices for using AWS Transit Gateway:

- Centralized Network Architecture: Embrace the centralized nature of AWS Transit Gateway to consolidate network connectivity and simplify network management. Centralizing routing and connectivity streamlines operations, reduces complexity, and gives better visibility into network traffic.

- Scalable Attachments and Addressing: Plan attachments and IP addressing for growth from the outset. Use non-overlapping CIDR blocks across VPCs and on-premises networks, since overlapping ranges cannot be routed correctly through the same route table. Keep attachment counts within service quotas.

- Route Table Management: Use separate transit gateway route tables for each segment (for example, production, development, and shared services). Review them regularly, including any static routes that propagation does not manage, so that traffic follows the intended paths.

- Security Controls: Use transit gateway route tables and blackhole routes to segment traffic. Apply granular security groups and network ACLs inside the attached VPCs, where these controls operate. Encrypt sensitive data in transit, for example with TLS or Site-to-Site VPN.

- Monitoring and Logging: Use Amazon CloudWatch metrics for the transit gateway, Transit Gateway Flow Logs or VPC Flow Logs for traffic records, and AWS CloudTrail for configuration changes. Monitoring network activity in this way helps teams identify and address problems before they affect the network's health and security.

- High Availability and Redundancy: The transit gateway itself is a highly available managed service within its Region, but the connections to it need redundancy:
  - Attach a subnet in every Availability Zone your workloads use.
  - Keep both tunnels of each Site-to-Site VPN connection up.
  - Use redundant Direct Connect connections, ideally at separate locations, optionally with VPN as a backup path.

- Compliance and Governance: Align the use of AWS Transit Gateway with industry-specific compliance requirements and governance standards. Make sure network configurations and policies follow regulatory guidelines and internal governance frameworks to maintain compliance and data security.
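The availability practice can be turned into an automated check. A VPC attachment serves only the Availability Zones where it has a subnet. This Python sketch therefore flags two problems: Availability Zones that host workloads but lack an attachment subnet, and attachments confined to a single zone. It takes plain dictionaries as input. In practice they could be assembled from `describe_transit_gateway_vpc_attachments` (the `SubnetIds` field) and `describe_subnets` (the `AvailabilityZone` field), but that wiring is an assumption not shown here. The function names and IDs are hypothetical.

```python
from __future__ import annotations


def missing_attachment_azs(workload_azs: dict[str, set[str]],
                           attachment_azs: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each VPC, list Availability Zones that host workloads but have no
    transit gateway attachment subnet (those resources cannot reach the gateway)."""
    gaps: dict[str, set[str]] = {}
    for vpc, azs in workload_azs.items():
        missing = azs - attachment_azs.get(vpc, set())
        if missing:
            gaps[vpc] = missing
    return gaps


def single_az_attachments(attachment_azs: dict[str, set[str]]) -> set[str]:
    """VPCs whose transit gateway attachment spans fewer than two Availability Zones."""
    return {vpc for vpc, azs in attachment_azs.items() if len(azs) < 2}
```

Running both functions as part of regular configuration reviews catches the common mistake of adding workloads in a new Availability Zone without extending the attachment.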

By incorporating these best practices into the deployment and management of AWS Transit Gateway, organizations can optimize network connectivity, enhance security, and streamline network operations. This proactive approach enables businesses to harness the full potential of AWS Transit Gateway, fostering a robust and agile network infrastructure that meets the evolving demands of modern enterprises.

Case studies of companies using AWS Transit Gateway for connecting to on-premises networks

Company A: Streamlining Network Connectivity

Company A, a leading technology firm, sought to enhance the connectivity between its AWS infrastructure and on-premise data centers to support its growing portfolio of cloud-based services. By implementing AWS Transit Gateway, Company A was able to streamline network connectivity across multiple VPCs and on-premise environments, consolidating network management and improving overall network performance.

With AWS Transit Gateway, Company A achieved a centralized and scalable network architecture, allowing for seamless communication between its AWS resources and on-premise data centers. The simplified network management capabilities of AWS Transit Gateway enabled Company A to efficiently manage routing and connectivity, reducing operational overhead and enhancing network visibility.

Furthermore, the integration of VPN connections facilitated secure communication between the transit gateway and on-premise environments, ensuring that data transfer between AWS and on-premise resources remained protected. This seamless integration empowered Company A to leverage the full potential of AWS services while maintaining a cohesive and secure network environment.

As a result of implementing AWS Transit Gateway, Company A experienced improved network performance, enhanced scalability, and streamlined network operations. The centralized approach to network connectivity provided Company A with the agility and flexibility to adapt to evolving business requirements, laying the foundation for a robust and efficient network infrastructure.

Company B: Optimizing Network Security and Compliance

Company B, a global financial services provider, faced the challenge of establishing secure and compliant connectivity between its AWS infrastructure and on-premise data centers. By leveraging AWS Transit Gateway, Company B was able to optimize network security and compliance while maintaining seamless connectivity across its network environments.

Company B combined AWS Transit Gateway with robust security controls, including granular security groups and network ACLs in its attached VPCs, to safeguard network traffic and protect sensitive financial data. Centralized management of network policies kept security measures consistent across connected VPCs and on-premise networks, supporting compliance with industry-specific regulatory requirements.

Additionally, the integration of AWS Direct Connect provided Company B with dedicated and high-speed connectivity between the transit gateway and on-premise data centers, further enhancing the security and reliability of network communication.

By implementing AWS Transit Gateway, Company B achieved a secure and compliant network infrastructure that met the stringent regulatory standards of the financial services industry. The centralized approach to network connectivity, coupled with robust security measures, enabled Company B to optimize network security and compliance while maintaining seamless connectivity between its AWS and on-premise environments.

These case studies exemplify the diverse applications of AWS Transit Gateway in real-world scenarios, showcasing its ability to streamline network connectivity, enhance security, and facilitate seamless integration between AWS and on-premise environments. Through the adoption of AWS Transit Gateway, organizations can achieve a robust and agile network infrastructure that meets the evolving demands of modern enterprises.

Conclusion

In conclusion, AWS Transit Gateway represents a transformative networking solution that empowers organizations to establish seamless connectivity between their AWS infrastructure and on-premise networks. By centralizing network management, enhancing scalability, optimizing performance, and bolstering security, AWS Transit Gateway offers a comprehensive framework for building a robust and efficient network infrastructure.

The benefits of using AWS Transit Gateway for connecting to on-premise networks are far-reaching. From simplifying network management and reducing operational overhead to enabling seamless integration with on-premise environments, AWS Transit Gateway provides a unified platform for organizations to streamline their network architecture and drive operational excellence.

Furthermore, the best practices for using AWS Transit Gateway underscore the importance of scalability, security, and compliance, guiding organizations to optimize their network connectivity and maintain a secure and resilient infrastructure. By adhering to these best practices, businesses can harness the full potential of AWS Transit Gateway and lay the groundwork for a network environment that meets the evolving demands of modern enterprises.

The case studies of companies leveraging AWS Transit Gateway exemplify its diverse applications in real-world scenarios, showcasing its ability to streamline network connectivity, enhance security, and facilitate seamless integration between AWS and on-premise environments. These examples underscore the transformative impact of AWS Transit Gateway on network architecture and connectivity, demonstrating its value across various industries and use cases.

In essence, AWS Transit Gateway gives organizations a centralized, scalable platform for connecting and segmenting their networks. It reduces the operational burden of managing many point-to-point links, which helps teams streamline network operations and build a more efficient and agile network architecture.

As organizations continue to embrace cloud technologies and expand their network footprint, AWS Transit Gateway stands as a foundational solution for establishing seamless connectivity between AWS and on-premise environments. By leveraging the capabilities of AWS Transit Gateway and adhering to best practices, businesses can build a resilient and agile network infrastructure that supports their growth and innovation in the digital era.